The Problems with the Kappa Statistic as a Metric of Interobserver Agreement on Lesion Detection Using a Third-reader Approach When Locations Are Not Prespecified - Academic Radiology

Inter-observer agreement and reliability assessment for observational studies of clinical work - ScienceDirect

References in Inter- and intraobserver agreement on the Load Sharing Classification of thoracolumbar spine fractures - Injury

Training Effect on the Inter-observer Agreement in Endoscopic Diagnosis and Grading of Atrophic Gastritis according to Level of Endoscopic Experience. - Abstract - Europe PMC

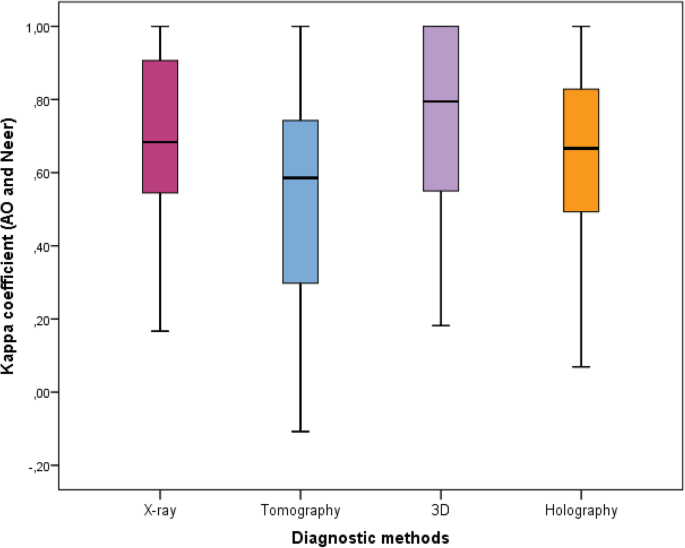

Inter-observer reliability of alternative diagnostic methods for proximal humerus fractures: a comparison between attending surgeons and orthopedic residents in training | Patient Safety in Surgery | Full Text

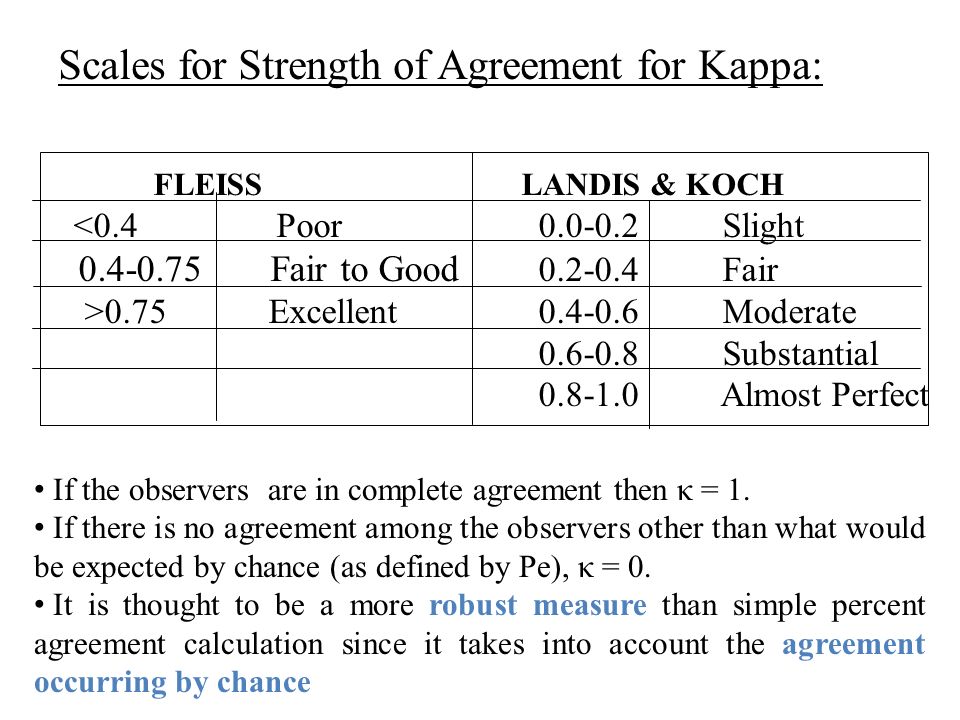

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

Inter-observer variation in the interpretation of chest radiographs for pneumonia in community-acquired lower respiratory tract infections - Clinical Radiology

![Inter-observer reliability and intra-observer reproducibility of the Weber classification of ankle fractures. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/bc547fe9ee8afbb62eb879dcb736a7698df53ddf/2-TableI-1.png)

[PDF] Inter-observer reliability and intra-observer reproducibility of the Weber classification of ankle fractures. | Semantic Scholar

Inter-observer agreement (Kappa statistic). 1, 2, 3: Observers; ALL:... | Download Scientific Diagram

Inter-observer variation can be measured in any situation in which two or more independent observers are evaluating the same thing. Kappa is intended to. - ppt download

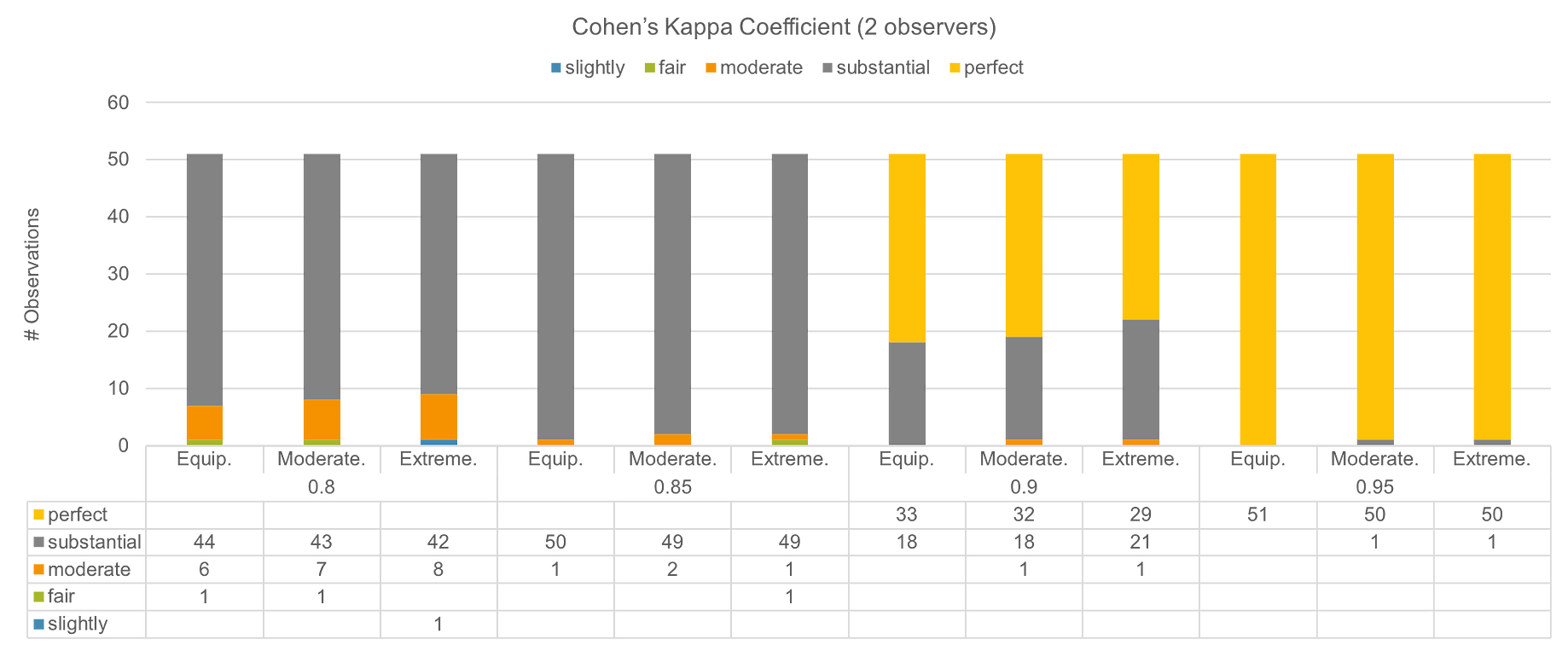

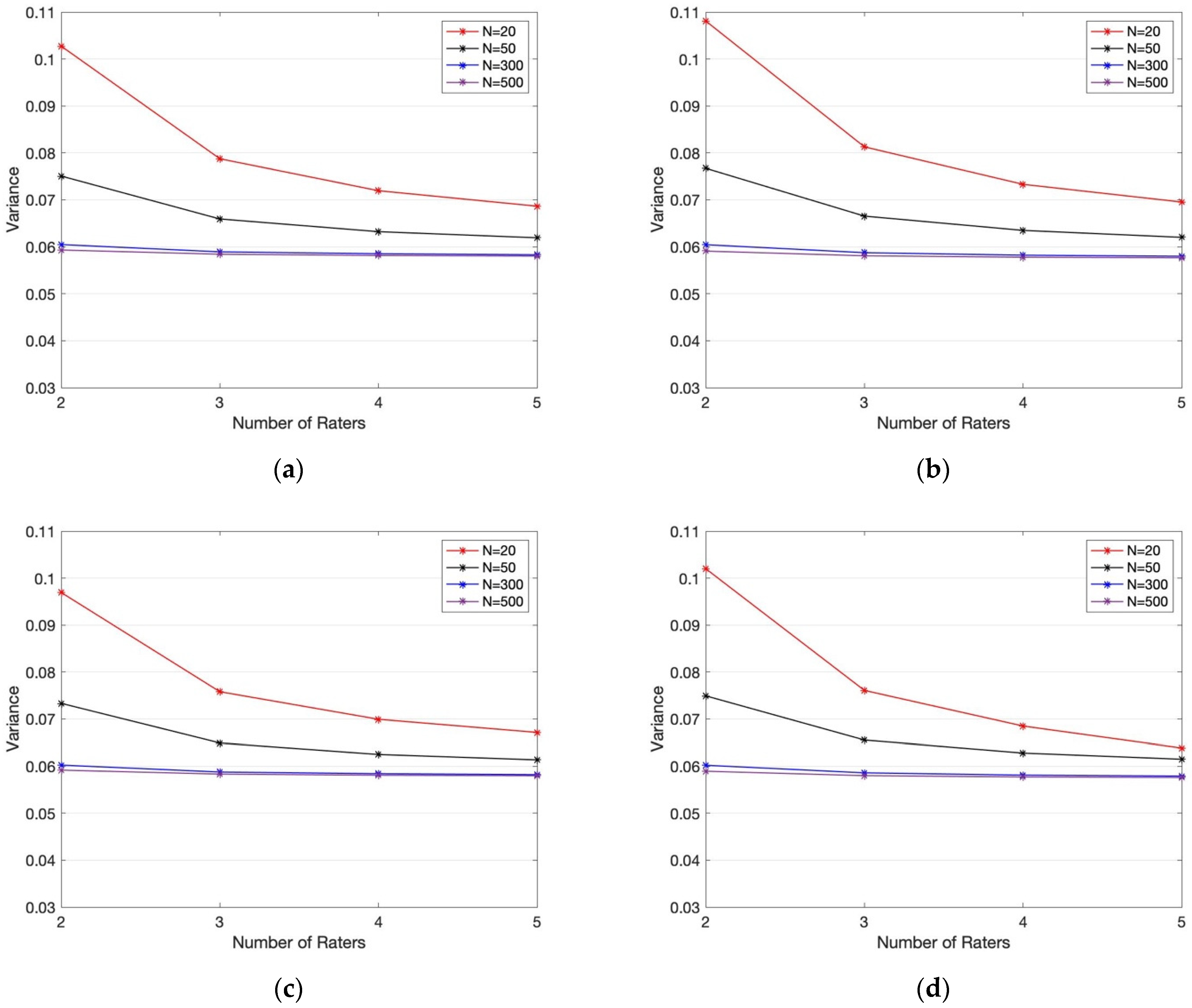

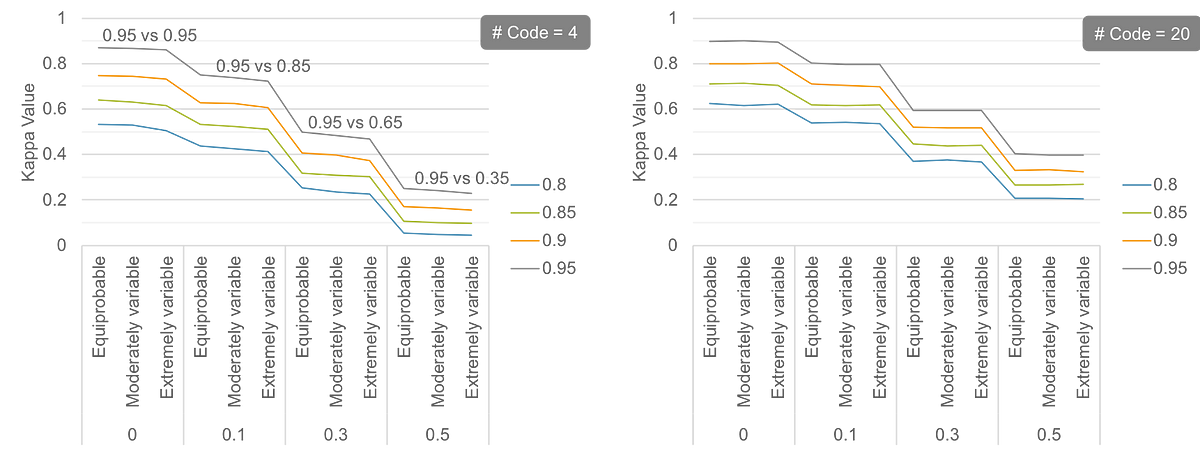

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters | HTML

Inter-observer variation in the histopathology reports of head and neck melanoma; a comparison between the seventh and eighth edition of the AJCC staging system - European Journal of Surgical Oncology

Intra and Interobserver Reliability and Agreement of Semiquantitative Vertebral Fracture Assessment on Chest Computed Tomography

![Understanding interobserver agreement: the kappa statistic. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/2-Table1-1.png)