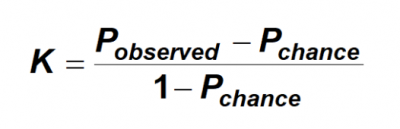

Inter-Annotator Agreement: An Introduction to Cohen's Kappa Statistic | by Surge AI | Dec, 2021 | Medium

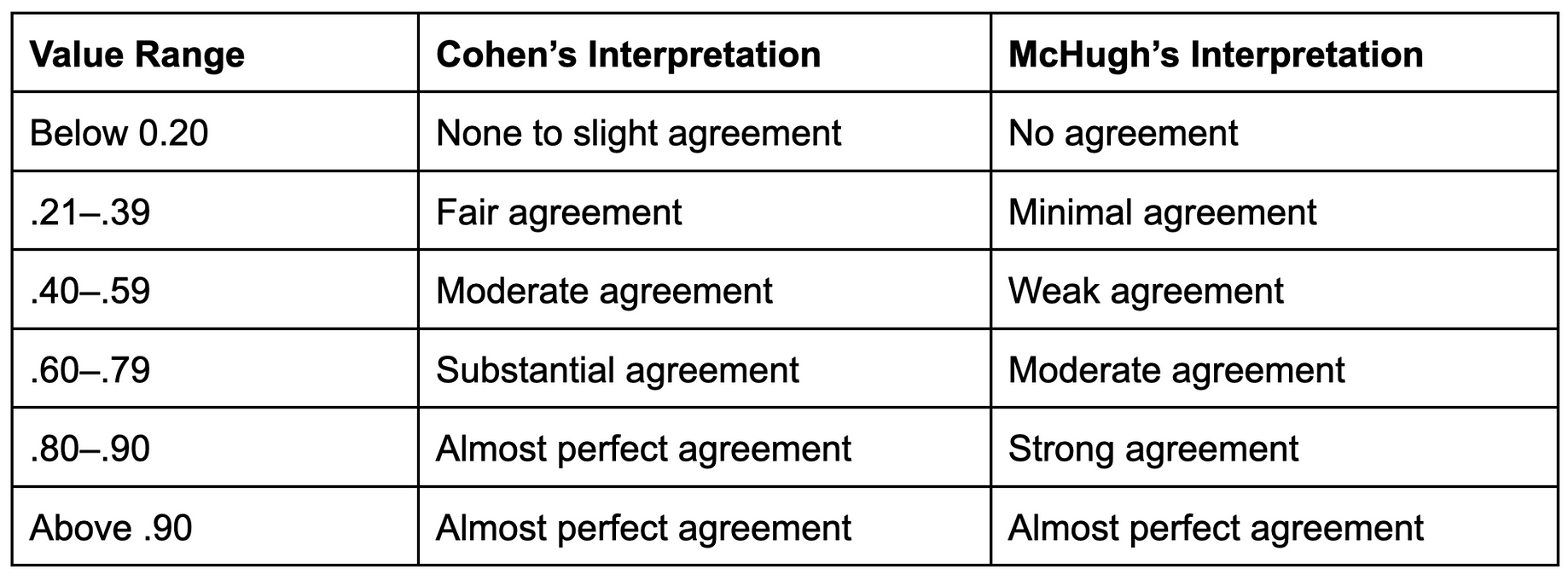

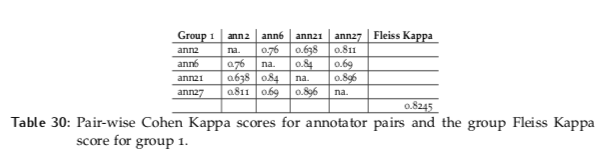

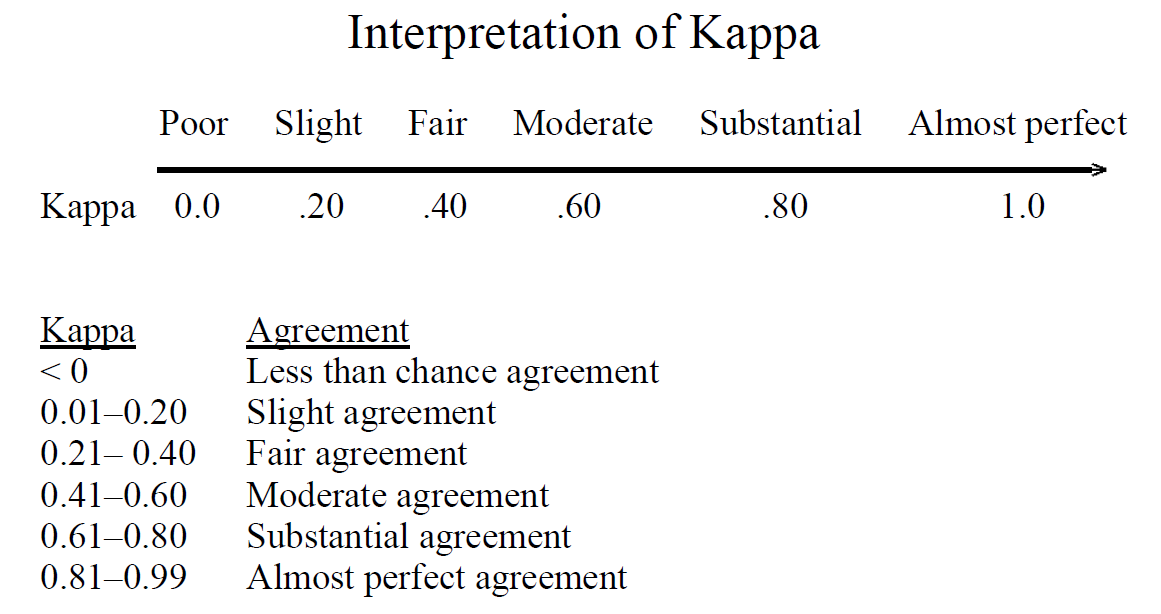

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

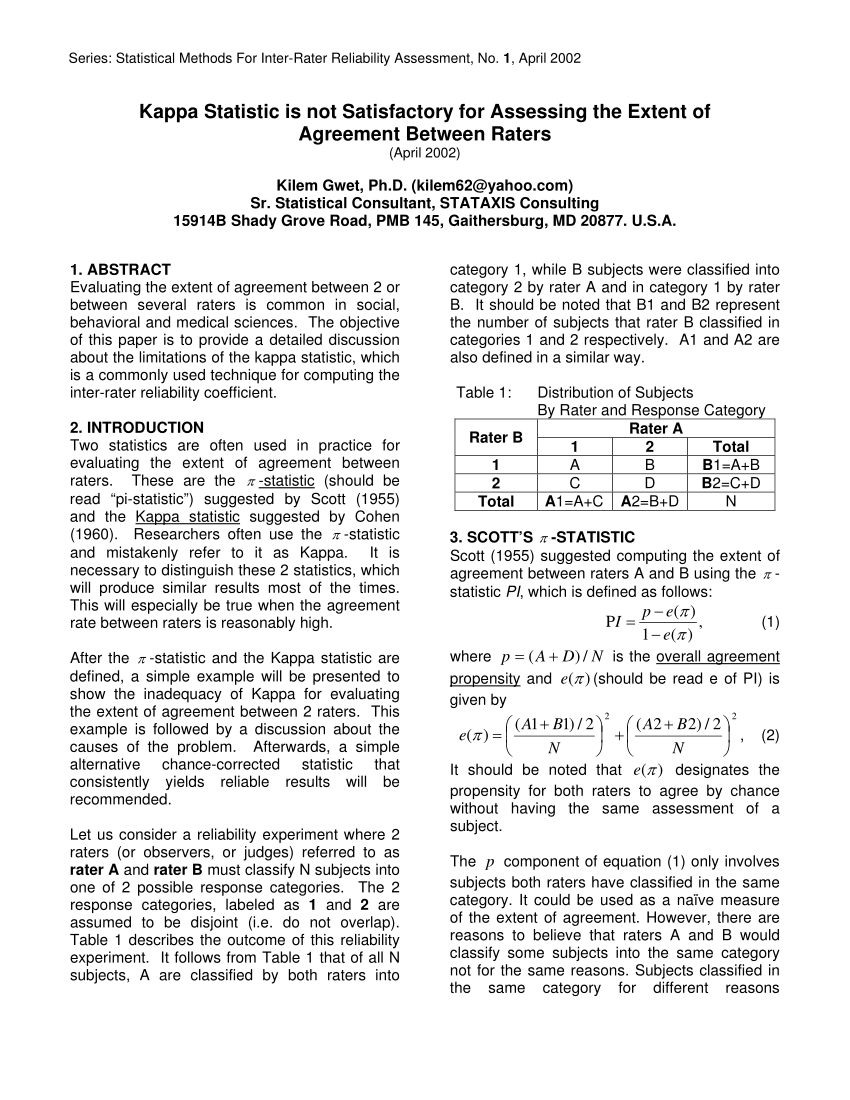

Kappa Test for Agreement Between Two Raters | PDF | Statistical Hypothesis Testing | Type I and Type II Errors

Inter-rater agreement Kappas. a.k.a. inter-rater reliability or… | by Amir Ziai | Towards Data Science

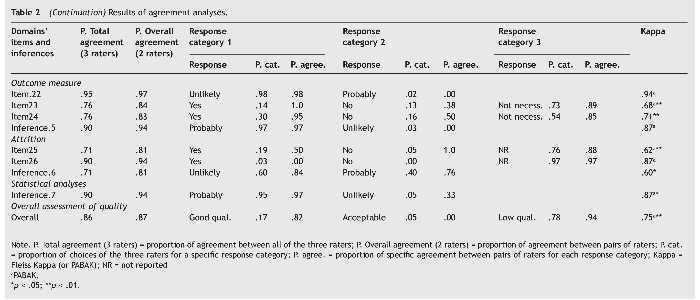

Q-Coh: A tool to screen the methodological quality of cohort studies in systematic reviews and meta-analyses | International Journal of Clinical and Health Psychology