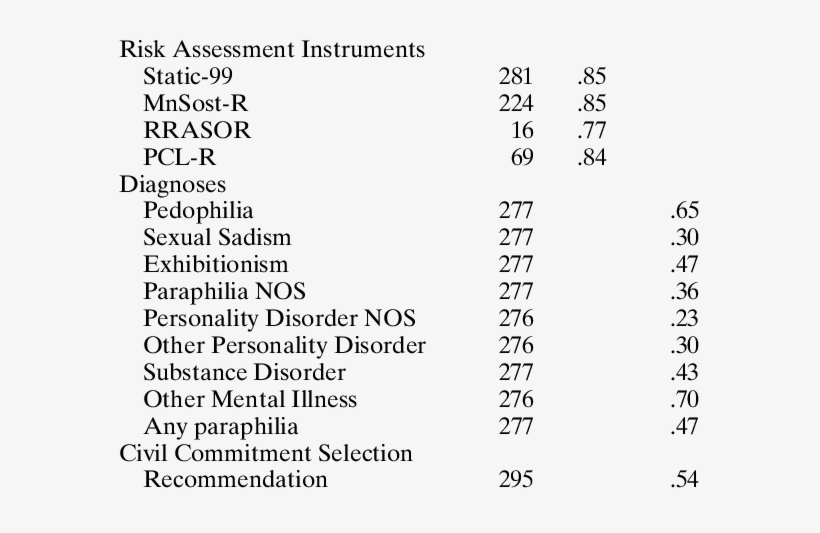

![\[Statistics Part 15\] Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh](https://datalabbd.com/wp-content/uploads/2019/06/15a-1.png)

[Statistics Part 15] Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh

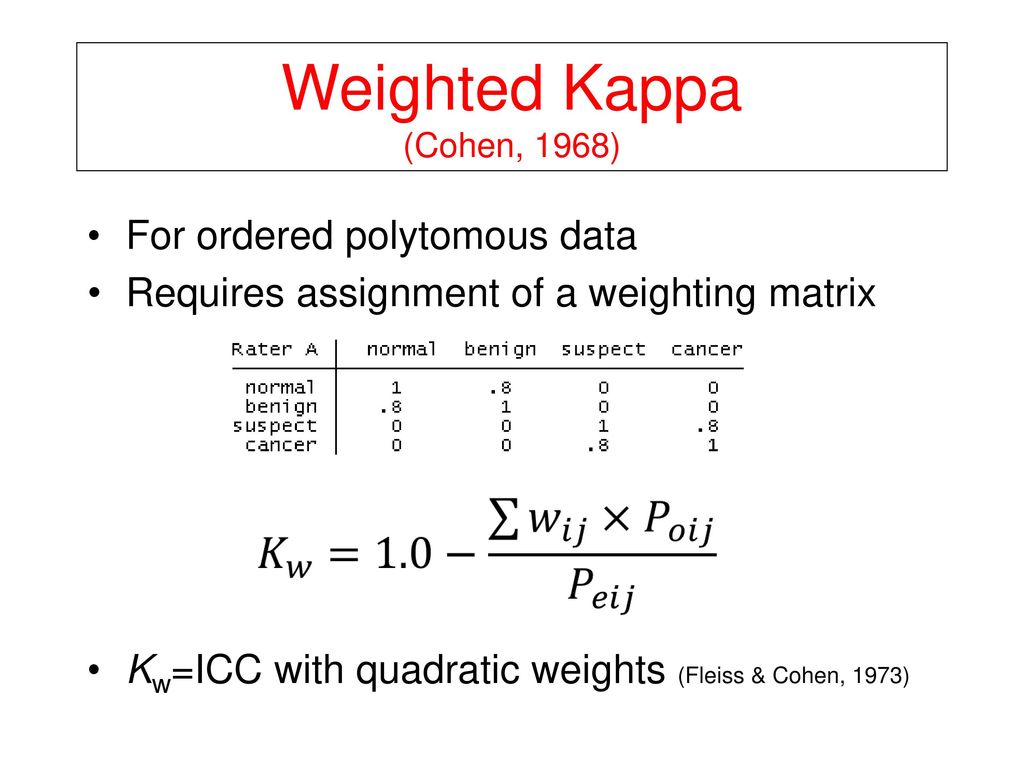

PLOS ONE: Standardization for Ki-67 Assessment in Moderately Differentiated Breast Cancer. A Retrospective Analysis of the SAKK 28/12 Study

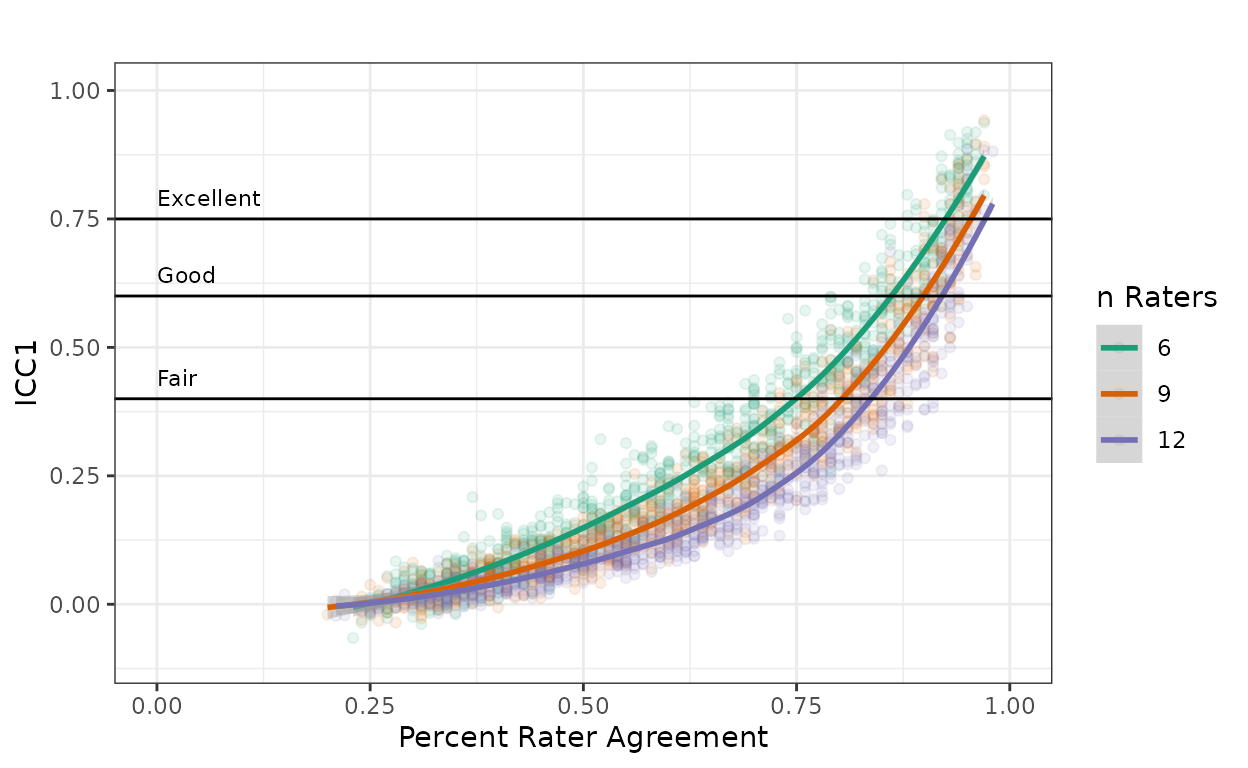

![\[Statistics Part 15\] Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh](https://datalabbd.com/wp-content/uploads/2019/06/15c-1.png)

[Statistics Part 15] Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh

![07.03 - Personal webpages at .>2 raters Fleiss’ kappa ICC Kendall’s coefficient of concordance - [PDF Document]](https://demo.fdocuments.in/img/378x509/reader019/reader/2020041707/5ba7a95709d3f2592c8b88fc/r-1.jpg)